Unified Data Security Platform

Protect sensitive data and control access at scale.

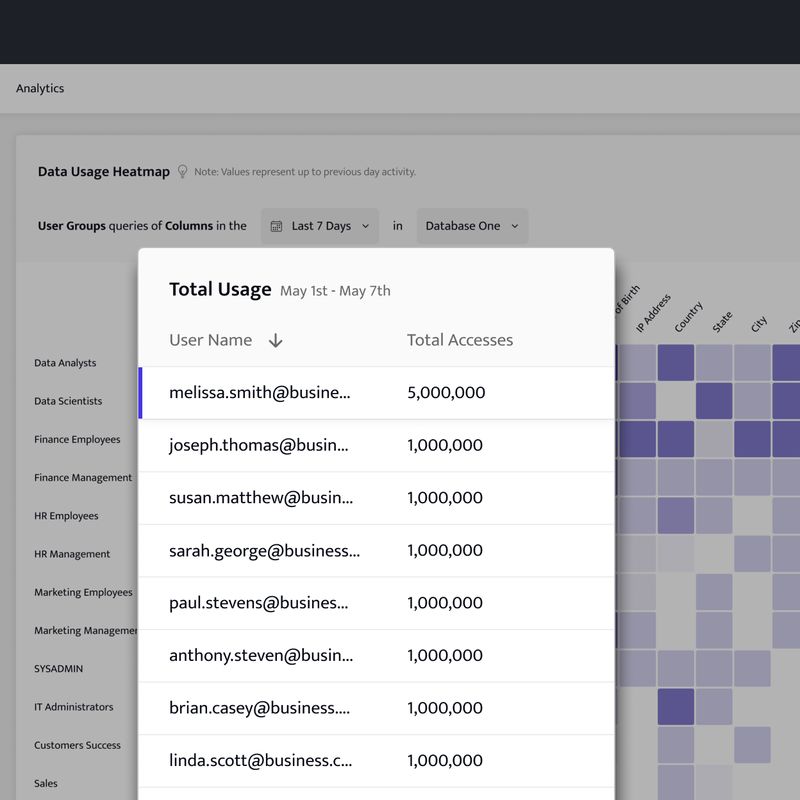

Database Activity Monitoring

Easily monitor data access and detect abnormal queries using our analytics and query audit logs. Enable alerts to allow security teams to quickly investigate any suspicious activity.

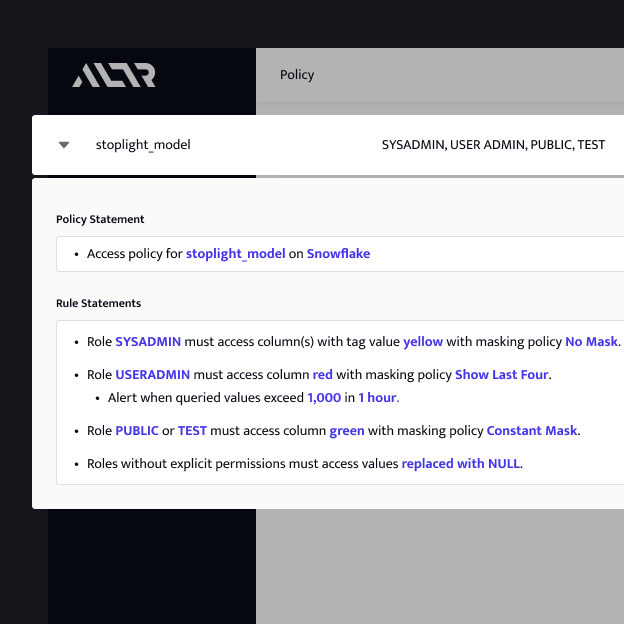

Dynamic Data Masking

Protect data through classification and dynamic data masking before it enters your cloud data warehouse. Seamlessly integrate masking with your data catalog or ETL/ELT pipeline.

Sensitive Data Protection

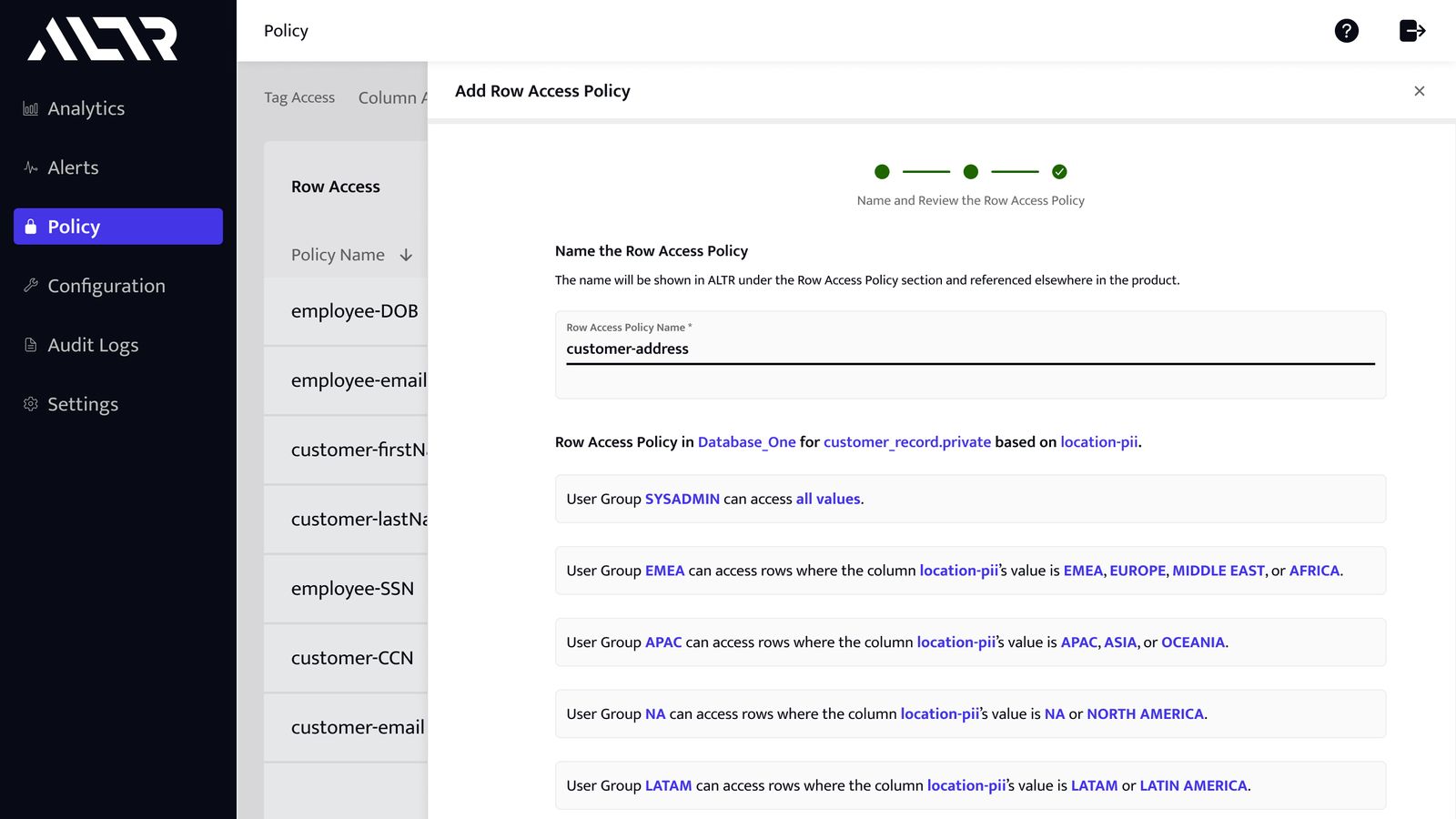

Secure highly sensitive data such as PHI, PCI, and PII from privileged access with advanced data protection and automated access policies.

Integrate with Data Catalogs

ALTR's Data Security Platform seamlessly integrates with data catalogs. Define and enforce security and policy in our platform while leveraging your existing tools.

FEATURES

One powerful platform for your

Dynamic Data Masking

Database Activity Monitoring

Data Classification

Tokenization

Open Source Integrations

Format Preserving Encryption

Increase Efficiency

Data teams get real-time access to sensitive data without risk while security teams gain full visibility over sensitive data. All data functions are fully aligned.

Reduce Complexity

Non-technical users can implement policy and simplify ownership, so data access stays streamlined and automated.

Manage Risk

Protect data at rest, in motion, and in use. Remove the risk of access threats by extending governance and security upstream and to the left.

“Through their native cloud integration, ALTR’s visibility into Snowflake Data Cloud activity is providing a solution for customers who need to defend against security threats.”

Omer Singer

Head of Cybersecurity Strategy, Snowflake